Introduction

The use of digital orthodontic setups has grown quickly. The purpose of this study was to test the interexaminer and intraexaminer reliabilities of 3-dimensional orthodontic digital setups in OrthoCAD (Align Technology, San Jose, Calif).

Methods

Six clinicians made digital orthodontic setups on 6 digital models twice, with a minimum interval of 2 weeks and a maximum interval of 4 weeks. OrthoCAD software was used, and treatment goals were all set the same according to the American Board of Orthodontics Objective Grading System (ABO-OGS). Differences between the 72 setups were measured with the ABO-OGS scores.

Results

In comparing setups 1 and 2, the intraexaminer mean absolute differences in total ABO-OGS scores ranged from 2.17 to 6.00 points, and the interexaminer mean absolute differences ranged from 4.77 to 5.56 points; all were statistically significantly greater than 0. All but 1 intraclass correlation coefficient (ICC) value showed significant excellent agreement (ICC, >0.8) for intraexaminer reliability. One ICC value was nonsignificant and showed moderate (ICC, 0.4-0.6) agreement. Interexaminer reliability showed significant good (ICC, 0.6-0.8) agreement.

Conclusions

ABO-OGS scores differed significantly between repeated setups by the same clinician and between setups by different clinicians when using OrthoCAD. Although this difference was low, it could be clinically significant. Interexaminer and intraexaminer reliabilities are not redundant for general use of the 3-dimensional orthodontic digital setup in OrthoCAD.

Three-dimensional (3D) digital orthodontic models are increasingly used and are well established in the orthodontic specialty. They offer instant access and are easy to store, reproduce, communicate, or duplicate. Digital models may well replace plaster models in the future, since they show high validity and clinically acceptable differences from plaster models for diagnosis, intra-arch and interarch measurements, treatment planning, and evaluation.

In addition to the previously described uses of 3D digital or plaster models, an orthodontic setup can also be produced from them. The first manual setup guideline was published in 1946. More recently, a detailed setup guideline for plaster models was published, as was a book about the orthodontic setup with an extensive chapter on using digital models and on making a setup more accessible and less time-consuming.

More software is now available—eg, OrthoCAD (Align Technology, San Jose, Calif), SureSmile (Orametrix, Richardson, Tex), and Orchestrate (Orchestrate, Rialto, Calif)—to create in-house digital setups that can be used for diagnosis, visualizing tooth-size discrepancies, treatment planning, indirect bonding, simulating treatment, and designing and producing orthodontic appliances. For instance, Invisalign (Align Technology) uses the digital setup for treatment planning and appliance manufacturing according to the orthodontist’s instructions. Subsequently the treatment plan, with the digital setup, is sent to the orthodontist for approval.

Numerous studies have been published on the use of digital setups. Only recently, Im et al validated the digital setup process by comparing the current gold standard of the manual tooth setup on plaster models with the digital setup.

Invisalign created a validated software tool that superimposes digital models, using the palate as a stable reference, to evaluate treatment outcomes in 3 dimensions. However, the reproducibility of the treatment plan proposed by Invisalign’s Virtual Orthodontic Technician is assessed only subjectively by the referring orthodontist, according to his or her proficiency in using Align Technology’s ClinCheck software, which allows the practitioner to accept or modify the planned tooth movements before the aligners are actually fabricated.

As with any diagnostic test, the most important criteria for any index are validity and reliability. Reliability means that repeated measurements by the same or different raters yield the same results.

Therefore, the aim of this study was to test the interexaminer and intraexaminer reliabilities of the 3D orthodontic digital setup in OrthoCAD. The null hypothesis was that there is no significant difference between the 2 digital setups of the same original models made by 1 clinician, or digital setups of the same original models made by different clinicians.

Material and methods

The sample consisted of 6 digital models selected from the database of a peripheral practice. All of these digital models were made with an iTero intraoral scanner (Align Technology) and imported into OrthoCAD with the option for digital setups activated. The following inclusion criteria were used: Class I or up to a maximum half premolar width Class II molar occlusion, slight to moderate crowding, and all permanent teeth present at least to the first molar. Exclusion criteria were severe deepbite or open bite, deciduous teeth, crossbite, extreme deep curve of Spee and curve of Wilson, ectopic teeth, extreme tooth wear, and severe tooth decay.

Original American Board of Orthodontics Objective Grading System (ABO-OGS) scores were computed for all 6 models by 1 clinician (L.N.J.F.) using the OrthoCAD measuring tool. Six copies were made from the 6 digital models and subsequently divided between 6 clinicians. All clinicians were faculty of the Department of Orthodontics at the Academic Centre for Dentistry Amsterdam, Amsterdam, The Netherlands. Three clinicians (numbers 4-6) were staff members with extensive clinical experience, and 3 (numbers 1-3) were postgraduate students with little clinical experience.

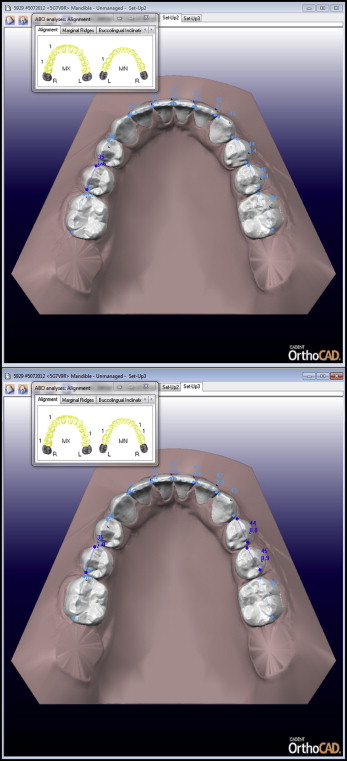

The clinicians were instructed on how to make digital setups with the OrthoCAD software (Fig) using the OrthoCAD instruction manual. Virtual treatment goals were described identically for each patient according to the ABO-OGS standards for dental casts, apart from root angulation, because that is generally not part of the digital setup. The following setup goals were set: (1) proper alignment; (2) marginal ridges at the same level; (3) buccolingual inclination of posterior teeth with minimal differences between the height of the buccal and lingual cusps; (4) occlusal relationship, with the buccal cusps of the maxillary molars, premolars, and canines aligning as close as possible to the interproximal embrasures of the mandibular posterior teeth, and the mesiobuccal cusp of the maxillary first molar aligning close to the buccal groove of the mandibular first molar; (5) occlusal contacts, with the buccal cusps of the mandibular premolars and molars and the lingual cusps of the maxillary premolars and molars contacting the occlusal surfaces of the opposing teeth; (6) overjet: in the posterior region, the mandibular buccal cusps and maxillary lingual cusps used to determine proper positions within the fossae of the opposing arch; in the anterior region, the mandibular incisal edges in contact with the lingual surfaces of the maxillary anterior teeth; and (7) all spaces closed in the dental arches.

All 6 clinicians made digital setups twice for all 6 patients according to the described treatment goals, with a minimum interval of 2 weeks and a maximum interval of 4 weeks. Thus, each clinician made 12 setups, resulting in a total of 72 setups in this project.

All second molars, if present, were excluded from the setup. Before the start of the project, a digital setup of another patient, matched to the inclusion criteria, was made for calibration.

In OrthoCAD, a digital setup is always produced with a virtual fixed orthodontic appliance. All digital setups were made with the same virtual Victory Series LP MBT 0.022-in brackets, the same virtual Victory Series MBT 0.022-in tubes (both, 3M Unitek, Monrovia, Calif), and the same virtual GAC Ideal wires (GAC/Dentsply, Bohemia, NY). These brackets and wires match the generally used fixed appliances in the clinic of the orthodontic department at the Academic Centre for Dentistry Amsterdam.

The ABO-OGS scores of the 72 setups were extracted from OrthoCAD; the original ABO-OGS score is automatically adjusted according to the changed tooth positions in the digital setup.

Statistical analysis

The total ABO-OGS scores from the 72 digital setups were entered in SPSS (version 20; IBM, Armonk, NY). One-sample t tests were used to test whether the mean absolute differences in the ABO-OGS scores differed from 0, both between setups 1 and 2 for each clinician and between all 6 clinicians. Intraclass correlation coefficient (ICC) values were used to evaluate the total ABO-OGS scores for interexaminer and intraexaminer reliabilities, making use of the 2-way mixed model, single measures, consistency type, and a 95% confidence interval.
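To make the ICC specification above concrete, the following is a minimal Python sketch of a single-measures, consistency-type ICC from a 2-way layout, often written ICC(3,1): (MS_rows - MS_error) / (MS_rows + (k - 1) MS_error), where rows are the rated models and k is the number of ratings per model. It is not the SPSS procedure used in the study, it does not compute the 95% confidence interval, and the function name and the scores are invented for illustration only; they are not study data.

```python
from statistics import mean


def icc_consistency_single(scores):
    """ICC(3,1): 2-way model, single measures, consistency type.

    scores: list of rows, one row per rated subject (e.g. a digital model),
            each row holding the k ratings of that subject
            (e.g. total ABO-OGS score for setup 1 and setup 2).
    """
    n = len(scores)        # number of subjects (rows)
    k = len(scores[0])     # number of ratings per subject (columns)
    grand = mean(v for row in scores for v in row)
    row_means = [mean(row) for row in scores]
    col_means = [mean(row[j] for row in scores) for j in range(k)]

    # Sums of squares of the 2-way ANOVA without replication
    ss_rows = k * sum((m - grand) ** 2 for m in row_means)
    ss_cols = n * sum((m - grand) ** 2 for m in col_means)
    ss_total = sum((v - grand) ** 2 for row in scores for v in row)
    ss_error = ss_total - ss_rows - ss_cols

    ms_rows = ss_rows / (n - 1)
    ms_error = ss_error / ((n - 1) * (k - 1))
    return (ms_rows - ms_error) / (ms_rows + (k - 1) * ms_error)


# Hypothetical total ABO-OGS scores for 6 models (setup 1, setup 2)
# by one clinician. These numbers are made up, not taken from the study.
hypothetical_scores = [
    [20, 23],
    [25, 24],
    [18, 20],
    [30, 28],
    [22, 25],
    [27, 26],
]
icc_example = icc_consistency_single(hypothetical_scores)
print(f"ICC(3,1) for the hypothetical scores: {icc_example:.2f}")
```

Because the consistency type removes the column (setup or rater) effect before the error term is formed, a constant offset between two setups does not lower this coefficient; only changes in the relative ordering or spacing of the models do.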

Results

Table I shows the mean absolute differences, standard deviations, standard errors, ranges, t values, and degrees of freedom in the total ABO-OGS scores between setups 1 and 2 for each clinician. In addition, the results of the 1-sample t tests are given for the comparison of the mean differences in total ABO-OGS scores for each clinician between setups 1 and 2 with 0. All mean differences were statistically significantly higher than 0. The intraexaminer mean absolute differences varied between 2.17 and 6.00 points (P ≤ 0.05). The intraexaminer range varied between 0 and 10 points, and the standard deviations were between 1.47 and 3.02. Clinicians 1 and 3 showed relatively high absolute differences and standard deviations.

| Clinician | Mean | SD | SE | Range | t | df | P |
|---|---|---|---|---|---|---|---|
| 1 | 5.50 | 3.02 | 1.23 | 2-10 | 4.47 | 5 | 0.007 † |
| 2 | 2.17 | 1.47 | 0.60 | 1-4 | 3.61 | 5 | 0.015 ∗ |
| 3 | 6.00 | 2.53 | 1.03 | 2-10 | 5.81 | 5 | 0.002 † |
| 4 | 2.83 | 1.47 | 0.60 | 1-5 | 4.72 | 5 | 0.005 † |
| 5 | 3.00 | 2.90 | 1.18 | 1-8 | 2.54 | 5 | 0.050 ∗ |
| 6 | 3.33 | 2.42 | 0.99 | 0-6 | 3.37 | 5 | 0.020 ∗ |

Table II shows the mean absolute differences, standard deviations, standard errors, ranges, t values, and degrees of freedom in the total ABO-OGS scores between all clinicians for setups 1 and 2. In addition, the results of the 1-sample t tests are given for the comparison of the mean differences in total ABO-OGS scores between all clinicians for setups 1 and 2 with 0. All mean differences were statistically significantly higher than 0. The interexaminer mean absolute differences varied between 4.77 and 5.56 points (P <0.05). The interexaminer range varied between 0 and 22 points, and the standard deviations were between 2.30 and 2.96.

| Setup | Mean | SD | SE | Range | t | df | P |
|---|---|---|---|---|---|---|---|
| Setup 1 | 5.56 | 2.30 | 0.94 | 0-17 | 5.91 | 5 | 0.002 † |
| Setup 2 | 4.77 | 2.96 | 1.21 | 0-22 | 3.95 | 5 | 0.011 ∗ |
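
The standard errors and t values in Tables I and II follow from the reported means and standard deviations through the 1-sample t statistic against 0, t = M / (SD / √n). The short Python sketch below recomputes them as a consistency check. It assumes n = 6 observations per row, inferred from the reported 5 degrees of freedom; the function and variable names are illustrative, and small deviations from the published t values are expected because the tabulated means and standard deviations are rounded.

```python
import math

# Assumption: df = 5 in both tables implies n = 6 observations per row.
N_OBSERVATIONS = 6

# (label, mean absolute difference, standard deviation, reported t)
TABLE_I = [
    ("clinician 1", 5.50, 3.02, 4.47),
    ("clinician 2", 2.17, 1.47, 3.61),
    ("clinician 3", 6.00, 2.53, 5.81),
    ("clinician 4", 2.83, 1.47, 4.72),
    ("clinician 5", 3.00, 2.90, 2.54),
    ("clinician 6", 3.33, 2.42, 3.37),
]
TABLE_II = [
    ("setup 1", 5.56, 2.30, 5.91),
    ("setup 2", 4.77, 2.96, 3.95),
]


def one_sample_t(mean_diff, sd, n, mu0=0.0):
    """Return (t, standard error) for a 1-sample t test against mu0."""
    se = sd / math.sqrt(n)
    return (mean_diff - mu0) / se, se


recomputed = []
for label, m, sd, t_reported in TABLE_I + TABLE_II:
    t, se = one_sample_t(m, sd, N_OBSERVATIONS)
    recomputed.append((label, se, t, t_reported))
    print(f"{label}: SE = {se:.2f}, t = {t:.2f} (reported {t_reported:.2f})")
```

Each recomputed t value should agree with the tabulated one to within rounding, which also confirms that the standard errors in both tables correspond to SD / √6.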

Tables III and IV show the intraexaminer and interexaminer reliabilities, respectively. All but 1 ICC value showed significant excellent agreement (ICC, >0.8) for intraexaminer reliability (P <0.01). Only 1 value (clinician 1) was nonsignificant and showed moderate (ICC, 0.4-0.6) agreement (Table III). Interexaminer reliability showed significant good (ICC, 0.6-0.8) agreement (P <0.01, Table IV).
