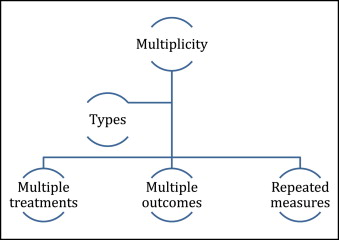

In the previous article, I discussed subgroup analyses. In this article and the next, we will consider other aspects of clinical trials that lead to multiplicity of data when multiple hypotheses are tested at the same time. In particular, I will discuss trials with multiple outcome end points, which are a combination of individual events, more than 2 treatment groups, and data collected from repeated measurements over time ( Figure ).

If we have multiple treatments—eg, a 3-arm trial comparing conventional brackets, passive self-ligating brackets, and active self-ligating brackets—there are several possible comparisons, as shown in the Table .

| Comparisons |

|---|

| CB vs pSLB |

| CB vs aSLB |

| pSLB vs aSLB |

| CB vs pSLB and aSLB |

| pSLB vs CB and aSLB |

| aSLB vs CB and pSLB |

| CB vs pSLB and aSLB |

The main issues with multiple treatments are related to the following.

- 1.

Required sample size. The ability to detect important effects depends on the sample size per group; as the number of trial arms increases, so does the number of patients needed. Therefore, the more treatments under investigation, the more subjects are needed. Would it be feasible to recruit the number of patients required for a multi-arm trial?

- 2.

Multiple comparisons. As the number of treatments increases, so does the number of potential comparisons to be made, as shown in the Table . This can lead to problems of interpretation associated with multiple comparisons and spurious findings that were explained in the previous article.

The best approach to multiple treatments is to develop a prespecified analysis plan. In the example above, a limited number of comparisons should be made instead of all possible comparisons shown in the Table .

In trials with 2 or more experimental treatments and a standard control group, the main purpose is often to assess whether there is any benefit of the treatments compared with the standard approach. It might therefore be appropriate to compare each experimental treatment directly with the control group rather than with each other. It is important here also to understand that, if both interventions are effective, a direct comparison between the 2 interventions will require a larger sample size than the comparison with the control, since the differences between treatments are expected to be smaller compared with the differences between the treatments and the control. Please refer to previous articles in this column on sample calculations.

This is an important point, and I shall give an example to further clarify the issue. Let us assume that we have mixed dentition patients with posterior crossbites randomized into 3 groups: rapid palatal expander, quad-helix, and no therapy. The outcome is whether there is a crossbite at the end of the study (permanent dentition and after appliance removal, when applicable).

If we assume that both expanders are effective, the difference in the expected effect on correction and the maintenance of crossbite correction during the permanent dentition stage between expander groups will be smaller compared with the difference in the expected effect on correction and maintenance of crossbites during the permanent dentition stage between any expander and the control (no therapy). Depending on the question we seek to answer, our design and sample calculation should be adjusted accordingly.

If we would like to compare the effects of the expanders with no therapy, we could perform 2 tests—rapid palatal expander vs no treatment, and quad-helix vs no treatment. If the objective of the trial were to compare the effectiveness of the 2 expanders, then a direct comparison of rapid palatal expander vs quad-helix would be required. The latter comparison is most likely to need more patients to achieve the required power to detect clinically important differences compared with the 2 former comparisons. The reason is that the expected difference in effect is likely to be smaller between the rapid palatal expander and the quad-helix compared with no treatment. Therefore, it is important to consider this effect at the design stage instead of performing many multi-directional comparison tests that have low power and also could give false-positive results.

Another approach would be to perform a global test for the 3 groups (rapid palatal expander vs quad-helix vs no treatment); however, if a statistically significant result were found, we must resort to post hoc pair-wise comparisons, which suffer from the subgroup analysis problems discussed in the previous article.

- 3.

Multiple outcomes. Trials often use multiple end points. For example, in orthodontics, we might use multiple end points to assess the degree of malocclusion and the quality of orthodontic therapy results. For example, the discrepancy index and the scoring method of finished cases used by the American Board of Orthodontics include information from several measurements on dental casts and radiographs. There are several approaches to dealing with such multiple end points, depending on the nature of the outcomes being measured.

We could prespecify the primary and secondary end points. In assessing the quality of orthodontic treatment results or successful impacted canine eruption, we can select in advance a small number of outcomes that we consider to have the utmost importance. For example, for quality of treatment results, primary outcomes might include Class I canine relationship and full alignment of the dentition, and, for canine eruption, emergence into the oral cavity. Other relevant outcomes such as canine directional changes could be considered; however, primary end points and any secondary outcomes to be analyzed must be prespecified. Caution should be exercised not to select many correlated outcomes that can cause multiplicity and interpretation issues.

We could choose a combined end point. Combined end points might apply in an evaluation of orthodontic treatment results and especially when it is difficult to recruit enough patients to observe the desired outcome.

The peer assessment rating index (PAR) is a good example for a combined end point used to assess orthodontic treatment quality. Instead of comparing multiple correlated outcomes recorded from the same patients, 1 combined measurement is used. Similarly, the American Board of Orthodontics discrepancy index combines into 1 end point several parameters that indicate the degree of malocclusion.

This approach increases the study power and hence makes it easier to detect real treatment effects and provides a simple summary of several outcomes. However, a problem could arise because the included outcomes on the combined end point are given equal importance.

In orthodontics, multiple outcomes pose a problem in cephalometric comparisons in which several similar and often-correlated measurements are statistically compared. This method invokes the multiple comparison problem and is likely to give spurious results. Multiple comparisons combined with the fact that interpretation is often based solely on P values might give the wrong impressions of treatment effects. An overall combined end point or, even better, a combined end point per area of interest (ie, maxilla, mandible, and dentition) in assessing treatment effectiveness would be a more reasonable approach.

We can correct the P value to allow for multiple testing. As the number tests increases, so does the risk of a false-positive result. The Bonferroni correction in which we increase the significance threshold from 0.05 to a lower value depending on the number of intended comparisons was discussed in the previous article on subgroup analyses. This approach suffers from limitations: since all outcomes have equal weight, the adjustment is too conservative because it reduces the power of the study (makes it harder to detect important differences) and assumes that the outcomes are not correlated.

The next article will discuss repeated measurements in the context of multiplicity.

Conclusions

- 1.

Multiple treatment trials should be planned carefully and analyzed appropriately.

- 2.

Methods for dealing with multiple outcomes can include combined summary end points, P value adjustments, and prespecification of primary and secondary outcomes.

- 3.

If there were a true difference between the comparison groups, multiple (subgroup) testing might also increase the chance of finding nonsignificant results (type II errors) because of loss of power. However, researchers are usually looking for a significant result, and the more likely problem is that a significant finding is reported when it is found, even if this were due to chance.

Stay updated, free dental videos. Join our Telegram channel

VIDEdental - Online dental courses