The medical literature shows that inadequate monitoring contributes significantly to anesthesia-related morbidity and mortality; analyses demonstrate that most complications could have been prevented with better monitoring. Although errors in patient management are inevitable, the timely detection of adverse physiologic changes through monitoring can enable practitioners to take action to prevent these errors from harming the patient.

Monitoring during anesthesia encompasses continuous observation and integration of all incoming information. Early recognition of changing signs and symptoms and evaluation of responses to therapeutic interventions enable us to provide safe and effective patient care. Advances in technology have made it possible to combine the information provided by instrumental monitors with that provided by our senses so that we can increase the safety of anesthesia delivery to our patients. Equal in importance to the actual parameters measured is the understanding of how monitors work, the information they provide, and the interpretation of that information to properly assess the patient.

Ideal monitors should be accurate, convenient, inexpensive, reliable, continuous, and noninvasive. During anesthesia, monitors primarily evaluate the circulatory, respiratory, central nervous, neuromuscular, and renal systems. Monitoring may be classified as either routine or specialized. This chapter will focus on the basic routine monitoring of patients undergoing anesthesia. The following definitions, approved by the House of Delegates at the 2007 Annual Meeting of the American Dental Association, explain concepts that are important for an understanding of the basis of anesthesia delivery to patients undergoing oral and maxillofacial surgery in the office, outpatient, and inpatient settings.

DEFINITIONS

Minimal sedation: a minimally depressed level of consciousness, produced by a pharmacologic method, that retains the patient’s ability to independently and continuously maintain an airway and respond normally to tactile stimulation and verbal command. Although cognitive function and coordination may be modestly impaired, ventilatory and cardiovascular functions are unaffected.

Conscious sedation: a drug-induced depression of consciousness during which patients respond purposefully to verbal commands, either alone or accompanied by light tactile stimulation. No interventions are required to maintain a patent airway, and spontaneous ventilation is adequate. Cardiovascular function is usually maintained.

Deep sedation: a drug-induced depression of consciousness during which patients cannot be easily aroused but respond purposefully following repeated or painful stimulation. The ability to independently maintain ventilatory function may be impaired. Patients may require assistance in maintaining a patent airway, and spontaneous ventilation may be inadequate. Cardiovascular function is usually maintained.

General anesthesia: a drug-induced loss of consciousness during which patients are not arousable, even by painful stimulation. The ability to independently maintain ventilatory function is often impaired. Patients often require assistance in maintaining a patent airway, and positive pressure ventilation may be required because of depressed spontaneous ventilation or drug-induced depression of neuromuscular function. Cardiovascular function may be impaired.

Continual: repeated regularly and frequently in steady succession.

Continuous: prolonged without any interruption at any time.

Time-oriented anesthesia record: documentation at appropriate time intervals of drugs, doses, and physiologic data obtained during patient monitoring.

HISTORY AND EVOLUTION OF MONITORING GUIDELINES

“Practice guidelines” are devised by a team of experts and provide recommendations that are based on analysis of the current literature with the goal of aiding the practitioner in performing patient care. Such guidelines are not considered requirements or standards of care. “Standards of care” specify appropriate treatment protocols for a typical patient or procedure but are still not hard-and-fast rules, and there may be deviation under exceptional circumstances. “Requirements” are obligatory codes of conduct or procedures that are deemed mandatory by the relevant governing body. In the practice of oral and maxillofacial surgery, most monitoring protocols are defined by guidelines issued either by the specialty or by state boards of dentistry.

The first detailed mandatory standards for monitoring during anesthesia were the Harvard minimal intraoperative monitoring standards. Devised in 1985, these standards were created in response to anesthesia-related incidents that were considered preventable with better intraoperative monitoring. They stressed continuous monitoring of ventilation and circulation. In 1986 the American Society of Anesthesiologists’ (ASA) committee on standards of care developed standards for basic intraoperative monitoring, based primarily on the Harvard standards, that stressed quantitative versus qualitative measurements. The ASA monitoring standards became widely accepted by the anesthesia community. This acceptance prompted the American Dental Society of Anesthesiology (ADSA) to create detailed guidelines for intraoperative monitoring in the dental setting. In 1991 the ADSA established its guidelines for intraoperative monitoring of patients undergoing conscious sedation, deep sedation, and general anesthesia (Box 2-1). These guidelines applied to all nonregional dental anesthesia care and considered sedation and delivery of anesthesia in the dental office setting. The ADSA guidelines combined the Harvard and ASA standards; they defined which methods of monitoring should be continually or continuously used and maintained a focus on oxygenation, ventilation, and circulation. The intent was to unify standards throughout dentistry and to incorporate the guidelines used within the dental specialties of periodontology, oral and maxillofacial surgery, and pediatric dentistry. In 1995 the ASA created a task force to develop recommendations for practitioners who are not anesthesiologists but who perform therapeutic procedures involving moderate and deep sedation. The ASA adopted guidelines for sedation and analgesia by nonanesthesiologists, and in 2002 the ASA task force updated these guidelines. These practice guidelines, however, do not apply to patients receiving minimal sedation or general anesthesia.

Standard I: Qualified Personnel

Qualified personnel shall be present in the operating room during the anesthesia period.

Objectives

1. During conscious sedation, a minimum of two qualified persons (e.g., doctor and assistant trained to monitor appropriate physiologic parameters) should be present.

2. Because deep sedation and general anesthesia are often indistinguishable entities with regard to the levels of consciousness or unconsciousness, a minimum of three qualified persons must be present during deep sedation and general anesthesia. There should be one person whose sole responsibility is monitoring and recording vital signs continually. This person may be classified as an anesthesia assistant, anesthesia technician, nurse, physician, or dentist.

3. In the event of special circumstances (e.g., an emergency in another location, radiation exposure to personnel), a modification in the number of personnel present may be made according to the best judgment of the clinician responsible for the patient under anesthesia. However, at no time should the monitoring of the patient be interrupted.

Standard II: Oxygenation

During the anesthesia period, the oxygenation of the patient shall be continually evaluated and ensured.

Objective

An adequate oxygen concentration must be supplied in the inspired gases so that sufficient oxygen is delivered to the body tissues.

Methods

Inspired gas. Fail-safe mechanisms (e.g., automatic nitrous oxide turnoff) must be used on delivery systems before the entry of the gas mixture to the patient’s respiratory system. If an anesthesia machine that is capable of delivering more than 80% nitrous oxide (i.e., <20% oxygen) is used, then low-oxygen alarms and oxygen analyzers should be used.

Blood oxygenation. The color of mucosa, skin, or blood should be evaluated on a continual basis. In certain circumstances (e.g., deep sedation, general anesthesia), mechanical monitors should be used to supplement clinical signs. Pulse oximetry is strongly encouraged during deep sedation and general anesthesia, especially in pediatric patients.

Standard III: Ventilation

During the anesthesia period, the ventilation of the patient shall be continually evaluated. When inhalation agents other than nitrous oxide are used, continuous observation of the patient is required.

Objective

The exchange of oxygen and carbon dioxide from the lungs must be adequately maintained.

Methods

1. During conscious sedation, clinical signs including chest excursion, auscultation of breath sounds, and movement of the reservoir bag on the gas machine (except when a nasal cannula is being used) should be continually monitored. Auscultation of breath sounds can be performed by a precordial or suprasternal stethoscope.

2. During deep sedation and general anesthesia, clinical signs including chest excursion, auscultation of breath sounds, and movement of the reservoir bag on the gas machine must be continuously monitored.

3. During endotracheal anesthesia, breath sounds and chest excursion must be verified after intubation and monitored continually. The use of capnography to measure carbon dioxide levels is encouraged.

Standard IV: Circulation

During the anesthesia period, the circulation and its related organ (e.g., heart) should be evaluated.

Objectives

Adequate perfusion of blood must be maintained to permit the exchange of oxygen from the blood to the tissues and carbon dioxide from the tissue to the blood.

Methods

1. When conscious sedation is being used, a blood pressure reading should be made before its use and after its use before discharge.

2. A blood pressure device must be used to continually monitor systolic and diastolic pressure during deep sedation, and the pulse and blood pressure should be properly recorded at regular intervals during deep sedation and general anesthesia.

3. The ECG should be used to continuously display cardiac rhythm during deep sedation and must be used during general anesthesia throughout the anesthesia period.

Standard V: Body Temperature

During the anesthesia period, the patient’s body temperature may need to be evaluated.

Objective

Body temperature should be maintained at or as near to normal as possible. Certain types of anesthetic agents are more commonly associated with excessive body temperature changes. Low body temperatures, although generally less likely to develop during dental or office-type anesthesia, may cause a delay in drug metabolism and patient recovery. High body temperatures may cause a hypermetabolic state and increase oxygen consumption.

Methods

1. An enteral or transcutaneous device should be readily available to monitor body temperature during or after general anesthesia.

2. During general anesthesia, when anesthetic agents that are frequently implicated in malignant hyperthermia (e.g., depolarizing muscle relaxants and volatile gaseous agents) are used, monitoring body temperature continually is encouraged.

CIRCULATORY SYSTEM MONITORING

Intraoperative monitoring of the circulatory system is performed with continuous electrocardiography, pulse oximetry, and measurement of arterial blood pressure and heart rate.

Blood Pressure Monitor

Large intraoperative swings in blood pressure can occur rapidly and can contribute to perioperative morbidity. Particularly alarming is rapid onset hypotension following drug administration because it is a well-known antecedent to cardiac arrest. During acute hypotension, myocardial wall tension decreases, thereby decreasing oxygen demand; however, coronary artery perfusion diminishes to an even greater extent, putting the patient at risk of cardiac morbidity. Hypertension decreases coronary blood flow by increasing myocardial wall tension, thereby creating increased demand for and consumption of oxygen. Either extreme increases the risk of cardiac ischemia with possible adverse outcome. In addition to cardiac perfusion, cerebral and renal perfusion must also be maintained if patient morbidity is to be prevented, and these can be affected by wide swings in blood pressure. It would be ideal to routinely monitor blood pressure continuously to prevent hemodynamic events; however, the most widely used method of continuous measurement, an indwelling arterial catheter, is invasive. In the outpatient clinic, the most common methods of blood pressure recording are noninvasive and intermittent with various automated methods of measurement replacing manual methods.

In traditional sphygmomanometry, the observer detects Korotkoff’s sounds by stethoscope upon release of a cuff-occluded brachial artery. The pressure recording at the first sound is referred to as the systolic blood pressure. The diastolic pressure is recorded as the point at which the sounds cease upon further deflation of the cuff. Several factors affect the accuracy of measurement. The relationship between cuff size and arm circumference is the most important determinant of accurate blood pressure readings. In the outpatient clinic, the most common blood pressure measuring error (84% of errors) is miscuffing, specifically, using small cuffs on large arms. The proper cuff length should be 80% of arm circumference, and the proper cuff width, at least 40% of arm circumference. Undersized cuffs can cause falsely high readings, whereas oversized cuffs cause falsely low readings; however, because the error caused by an oversized cuff is smaller than that caused by an undersized cuff, a larger cuff is preferable.
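The sizing rule above lends itself to a quick arithmetic check. The following is only an illustrative sketch: the function name and the sample bladder dimensions are assumptions made for the example and are not taken from any cuff manufacturer's specification.

```python
def cuff_fit(arm_circumference_cm, bladder_length_cm, bladder_width_cm):
    """Check a cuff against the rule in the text:
    length about 80% and width at least 40% of arm circumference."""
    required_length = 0.80 * arm_circumference_cm
    required_width = 0.40 * arm_circumference_cm
    if bladder_length_cm < required_length or bladder_width_cm < required_width:
        # An undersized cuff tends to give falsely high readings.
        return "undersized"
    # A cuff at or above the minimum is preferred; the text notes that the
    # error from an oversized cuff is smaller than from an undersized one.
    return "adequate"


# Hypothetical example: a 32 cm arm needs roughly 25.6 cm x 12.8 cm or larger.
print(cuff_fit(32, 23, 12))  # undersized
print(cuff_fit(32, 30, 16))  # adequate
```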

The site of placement is also important. As the site of monitor placement becomes more distal, the systolic pressure increases while the diastolic decreases. For patients with peripheral vascular disease, it is important to measure the pressure as proximal as possible because peripheral sites may give erroneously low pressure readings.

The position of the arm is a very important determinant of blood pressure measurements. The cuff should be at the same level as the patient’s heart; otherwise hydrostatic pressure will create an error in the measurement. For every 10 cm above or below the level of the heart, 7.5 mm Hg must be added or subtracted from the reading. For example, if the upper arm is below the level of the right atrium (as it is when it is hanging down while the patient is seated), the reading will be falsely elevated. Similarly, if the arm is above the level of the heart, the reading will be falsely low. The reading will also be high if isometric effects are involved, as when the patient actively holds the arm up instead of allowing the arm to be passively supported or if the patient is positioned so that the back is unsupported or the legs are dangling.
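The hydrostatic correction described above is simple enough to express directly. This minimal sketch uses only the figure given in the text (7.5 mm Hg per 10 cm); the function name and sign convention are assumptions chosen for the example.

```python
MMHG_PER_CM = 7.5 / 10.0  # from the text: 7.5 mm Hg for every 10 cm


def correct_for_cuff_height(reading_mmhg, cuff_height_above_heart_cm):
    """Return the pressure referenced to heart level.

    Positive height: cuff above the heart, reading falsely low, so add.
    Negative height: cuff below the heart, reading falsely high, so subtract.
    """
    return reading_mmhg + MMHG_PER_CM * cuff_height_above_heart_cm


# Arm hanging 20 cm below heart level with a reading of 130 mm Hg.
print(correct_for_cuff_height(130, -20))  # 115.0
# Arm raised 10 cm above heart level with a reading of 110 mm Hg.
print(correct_for_cuff_height(110, 10))   # 117.5
```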

Most of the automated noninvasive blood pressure monitors in use today determine blood pressure measurements by detecting a sequence of oscillations in cuff pressure using a pressure transducer. The cuff is inflated by an air pump to a predetermined pressure that is held while pressure pulsations are still present in the arterial wall. These oscillations are transmitted through the cuff to the transducer, and this pressure becomes the point at which the systolic blood pressure reading is determined. Cuff pressure increases until no pulsations (oscillations) are detected; then the cuff pressure is released and blood starts to flow again in the occluded artery. The pressure oscillations are once again transmitted through the cuff, and this becomes the point for diastolic pressure determination. The monitor measures the magnitudes of these oscillations and compares them against algorithms to determine measurements for systolic, diastolic, and mean pressures.
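The chapter does not specify which algorithms the monitors apply to the oscillation magnitudes. One approach widely described in the engineering literature is the maximum-amplitude method with fixed characteristic ratios, sketched below as an assumption rather than as any manufacturer's method. The ratios (0.5 and 0.8) are placeholders, since real devices use proprietary values, and the sample data are synthetic.

```python
def oscillometric_estimate(cuff_pressures, amplitudes, sys_ratio=0.5, dia_ratio=0.8):
    """Estimate (systolic, mean, diastolic) from a cuff deflation sweep.

    cuff_pressures: cuff pressure at each step, in descending order (mm Hg).
    amplitudes: oscillation amplitude measured at each step.
    sys_ratio, dia_ratio: assumed characteristic ratios (placeholders).
    """
    peak_index = max(range(len(amplitudes)), key=lambda i: amplitudes[i])
    peak = amplitudes[peak_index]
    # Mean pressure: cuff pressure at which oscillations are largest.
    mean_pressure = cuff_pressures[peak_index]
    # Systolic: highest cuff pressure whose amplitude reaches sys_ratio * peak.
    systolic = next(
        p for p, a in zip(cuff_pressures[:peak_index + 1], amplitudes[:peak_index + 1])
        if a >= sys_ratio * peak
    )
    # Diastolic: lowest cuff pressure whose amplitude still reaches dia_ratio * peak.
    diastolic = next(
        p for p, a in zip(reversed(cuff_pressures[peak_index:]),
                          reversed(amplitudes[peak_index:]))
        if a >= dia_ratio * peak
    )
    return systolic, mean_pressure, diastolic


# Synthetic deflation sweep, for illustration only.
pressures = [160, 150, 140, 130, 120, 110, 100, 90, 80, 70, 60]
amps = [0.2, 0.4, 0.8, 1.5, 2.2, 2.8, 3.0, 2.6, 2.0, 1.2, 0.5]
print(oscillometric_estimate(pressures, amps))  # (130, 100, 90)
```

A production monitor would interpolate between steps and reject motion artifact; this sketch only shows how an amplitude envelope can be turned into three pressure values.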

The advantages of automated blood pressure monitors are ease of use, safety, and freedom from operator bias; in addition, accurate placement over an artery is not as crucial as with traditional sphygmomanometers. The automated monitors work when peripheral vasoconstriction is present and, unlike traditional mercury-gravity manometers, do not need to allow time for venous drainage to obtain accurate measurements. They also work in very noisy environments and are not sensitive to electrosurgical interference. The complications and limitations associated with the use of automatic arterial blood pressure monitors include the risk of compartment syndrome and nerve palsies because of repeated inflation and device-related failures, such as the inability of the monitor to detect blood pressures in patients with dysrhythmias. Automatic blood pressure monitors may also contribute to loss of vigilance by the anesthetist.

Noninvasive continuous blood pressure monitors are available, although they are used mainly in research. One monitor, based on a technique described in the early 1970s by Penáz, incorporates an inflatable finger cuff with an infrared photoplethysmograph devised to measure the blood volume of the finger artery under the cuff. With each heartbeat, the blood volume in the digital artery increases during systole and decreases during diastole. Because the pressure in the cuff is kept equal to that inside the artery, the vessel wall is considered to be unloaded (i.e., its transmural pressure is zero). This method is also known as the vascular unloading technique. This mean arterial set-point is then maintained by small adjustments to the cuff volume; the size of the adjustments is determined by changes in arterial wall pressure as detected by a computer. This principle is the basis for the beat-by-beat measuring of blood pressure.

Another method of noninvasive continuous blood pressure monitoring is based on arterial applanation tonometry. A superficial artery is flattened between its supporting bone and an externally placed transducer. The transducer senses the pulse, the maximal pulse amplitude, and the widest pulse pressure. These measurements are then calibrated to a previously recorded oscillometric brachial artery pressure measurement, and continuous calibration and measurement of blood pressure are obtained. Early research has shown that these noninvasive monitors are accurate; however, further studies are needed to compare data with standards and to validate the use of these monitors in the clinical setting.

Electrocardiogram

The contraction of the heart muscle is associated with electrical changes. These changes, or depolarization and repolarization, can be detected at the surface of the body with skin surface electrodes that are joined to the electrocardiograph (ECG) by wires. This machine compares the electrical activity in each of the electrodes and forms a picture of the heart from different directions. This picture is displayed in a pattern (the ECG tracing) that is characteristic from each view. This tracing can then be used to analyze, in detail, the electrical activity of the heart.

The ECG is used to monitor heart rate and to detect dysrhythmias, conduction defects, or other alterations in the electrical activity of the myocardium, such as ischemia or electrolyte imbalance. Standard leads I, II, and III are the most commonly used during anesthesia and are excellent for detecting dysrhythmias. Lead II allows detection of ischemia of the inferior wall and also reveals maximal P-wave amplitude for good detection of dysrhythmias. With the addition of lead V, ischemia of the anterior and lateral walls of the left ventricle—the common and deleterious sites of ischemia—can be detected. Therefore a five-lead system is often used to monitor patients undergoing general anesthesia, with which the appearance of dysrhythmias might be anticipated. The ECG allows us to detect myocardial changes and intervene when normal rhythm is crucial; however, the ECG only provides information about electrical activity—it does not give us information about the heart’s mechanical ability to pump and maintain circulation. Therefore the ECG monitor is best used in conjunction with the pulse oximeter for circulatory system monitoring.

For detecting myocardial ischemia, the ECG monitor is essential. Hypoxia and the release of endogenous catecholamines because of pain are common causes of dysrhythmias during sedation. Myocardial ischemia resulting from hypoxia is indicated by depression or elevation of the ST segment. On a normal ECG tracing, the ST segment should be isoelectric, that is, at the same level as the baseline between the T wave and the next P wave. If the ST segment is elevated, acute myocardial injury or recent infarction may have occurred. The lead through which elevation is detected indicates the part of the heart that has been damaged. Pericarditis also causes ST elevation and is seen in most leads because it affects the entire heart. Horizontal ST segment depression is usually a sign of ischemia rather than infarction. Downsloping ST segment depression, also indicative of ischemia, may also be associated with digoxin therapy. Horizontal or downsloping ST segment depression is a more ominous sign of myocardial problems than upsloping ST segment depression. T wave changes can also signify ischemia; however, T wave inversion is also seen with ventricular hypertrophy, bundle branch block, and digoxin therapy. T wave inversion is normal in leads III, VR, and V1. Electrolyte abnormalities may also be reflected in the ECG tracing. Hypokalemia causes T wave flattening, whereas hyperkalemia causes peaked T waves and may cause widening of the QRS complex. Minor changes may also occur regularly in the ST segment and the T wave; these are considered nonspecific ST-T changes and are usually of no great significance. Although changes in the ST segment and T wave are not specific for ischemia, when these abnormalities are detected during anesthesia, they should be immediately investigated.

RESPIRATORY SYSTEM MONITORING

An inadequate airway during anesthesia contributes significantly to morbidity and mortality. Ventilatory changes resulting from administered sedative medications tend to precede cardiovascular system depression. Respiratory system monitoring is an extremely important aspect of patient care during anesthesia and involves visual methods and the use of monitoring devices. Auscultation of breath sounds during conscious and deep sedation can be performed with a precordial or suprasternal stethoscope. Movement of the reservoir bag (if the patient is undergoing endotracheal or nitrous oxide/oxygen anesthesia) and visualization of chest excursion should be continually monitored, as should the color of the mucous membranes. However, these observational methods alone are not sufficient for assessing respiratory adequacy. The use of pulse oximetry and capnography has greatly increased the safety of anesthesia care.

Precordial/Pretracheal Stethoscope

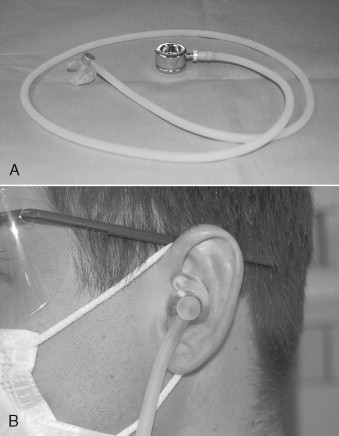

The precordial/pretracheal stethoscope is an inexpensive yet invaluable device for monitoring the circulatory and respiratory systems during anesthesia. It consists of a weighted stethoscope head connected via conduction tubing to a custom-molded monaural earpiece (Figure 2-1). Small circular disposable adhesive patches are available for attaching the stethoscope’s head to the skin of the chest or neck. When placed in the precordial region, the stethoscope monitors heart and breath sounds; when placed on the neck over the trachea, it monitors respiratory sounds over heart sounds (Figure 2-2). It could be argued that using the stethoscope in the pretracheal position is ideal for respiratory monitoring during ambulatory anesthesia for oral surgery because the drugs commonly used in sedation are respiratory depressants with a much lower chance of causing changes in cardiovascular function that would be diagnosed through auscultation. When used in the precordial position, the stethoscope’s head should be placed on the chest wall between the sternal notch and the left nipple for optimal evaluation of respiratory and heart sounds. Studies show that, although the design of the stethoscope has been changed so that it can electrically and selectively amplify and filter heart and breath sounds, the use of the stethoscope in the past decade has decreased dramatically. One limitation of the stethoscope is that, although breath sounds may be audible, the stethoscope cannot determine whether tidal volume is adequate. For this reason, other monitors of oxygenation, such as the pulse oximeter, are added.

Pulse Oximeter

Desaturation of as much as 10% frequently occurs during outpatient oral surgical procedures involving intravenous conscious sedation. In as many as 53% of anesthetized patients, desaturation of more than 10% occurs. Because the body’s oxygen reserves are small, a decrease in SpO2 can occur quickly. Monitoring oxygenation during delivery of anesthesia is therefore essential, and hypoxemia must be detected quickly. Detection allows restoration of oxygenation before irreversible and potentially life-threatening events can occur. Pulse oximetry is one of the most important advances in the clinical monitoring of arterial oxygen saturation. Before its development, physical assessment and arterial blood gas analysis were used to detect hypoxemia in anesthetized patients. It is difficult to recognize cyanosis by physical examination alone until the deoxyhemoglobin (Hb) level reaches 5 g/dL, which corresponds to an arterial oxygen saturation of about 67%. The pulse oximeter is a sensitive continuous monitor of oxygenation and therefore is an important component of our current monitoring protocols.
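The 67% figure follows from simple arithmetic if a total hemoglobin concentration of 15 g/dL is assumed; the chapter does not state that value, so it is an assumption of this sketch. The function name is likewise illustrative.

```python
def saturation_at_cyanosis(total_hb_g_dl, deoxy_hb_threshold_g_dl=5.0):
    """Saturation (%) at which deoxyhemoglobin reaches the visible-cyanosis
    threshold of 5 g/dL quoted in the text."""
    return 100.0 * (1.0 - deoxy_hb_threshold_g_dl / total_hb_g_dl)


# Assumed total hemoglobin of 15 g/dL: 10 of 15 g/dL is still oxygenated.
print(round(saturation_at_cyanosis(15.0)))  # 67
# With a lower total hemoglobin, cyanosis appears at an even lower saturation.
print(saturation_at_cyanosis(10.0))         # 50.0
```

The second call illustrates why visual assessment is particularly unreliable in anemic patients: less hemoglobin is available to reach the 5 g/dL threshold.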

Oximetry is the optical detection of oxygenated (HbO2) and deoxygenated hemoglobin within blood. The principle behind pulse oximetry is the tenet that the different components of solutions (such as hemoglobins in blood) absorb different amounts of light. By detecting and measuring the absorbed and reflected light, we can assess oxygenation status. Pulse oximeters emit light at two different wavelengths: one at 660 nm, which is within the red band of the light spectrum, and one at 940 nm, which is within the infrared light band. Once the red (R) and infrared (IR) lights have been emitted from an LED source, they pass through a cutaneous vascular bed (such as the finger) and are received by a photodetector that measures the intensity of the transmitted light at both wavelengths. At 660 nm, HbO2 allows more red light to pass through, whereas Hb absorbs more red light. At the 940 nm wavelength, however, this phenomenon is reversed (i.e., HbO2 absorbs more infrared light than does Hb).

The pulse oximeter circuitry filters the input from the photodetector to focus only on light of alternating intensity, such as that which pulses through an artery. The peak of infrared absorption/red reflection is determined to be the arterial inflow into the capillary bed, and the R/IR ratio is calculated. This is then converted to an arterial oxygen saturation level by means of an empiric algorithm that is based on calibration curves derived from studies of induced hypoxemia in volunteers.
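The ratio calculation can be sketched as follows. The pulsatile (AC) and steady (DC) signal components and the linear conversion 110 - 25R are assumptions: the linear formula is an approximation often quoted in teaching material, whereas actual devices use proprietary calibration curves of the kind described above.

```python
def ratio_of_ratios(ac_red, dc_red, ac_ir, dc_ir):
    """Normalized pulsatile absorbance at 660 nm divided by that at 940 nm."""
    return (ac_red / dc_red) / (ac_ir / dc_ir)


def spo2_from_ratio(r):
    """Convert the R/IR ratio to a saturation estimate (%).

    Uses an assumed textbook linear approximation, clamped to 0-100.
    It is not a device calibration curve.
    """
    return max(0.0, min(100.0, 110.0 - 25.0 * r))


# Hypothetical photodetector values, for illustration only.
r = ratio_of_ratios(ac_red=0.02, dc_red=1.0, ac_ir=0.04, dc_ir=1.0)
print(r)                     # 0.5
print(spo2_from_ratio(r))    # 97.5
print(spo2_from_ratio(1.0))  # 85.0
```

Under this approximation, a ratio near 1 maps to a reading of about 85%.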

The pulse oximeter has become the standard for continuous noninvasive assessment of arterial oxygen saturation, which has even been referred to as the fifth vital sign. Pulse oximetry has the advantage of being accurate. Many studies show that the difference between saturation measurements obtained by pulse oximetry and those obtained by arterial blood gas analysis is insignificant when SpO2 is higher than 70%. Studies that have determined the accuracy of pulse oximeter measurements have used a co-oximeter for comparison. A co-oximeter transmits four wavelengths of light through blood and is believed to be the gold standard for detection of in vitro saturation. Unlike pulse oximeters, however, co-oximeters cannot provide continuous monitoring.

The pulse oximeter is a noninvasive, continuous, convenient, and inexpensive tool. However, despite its ease of use, the pulse oximeter provides us with data that can sometimes be misinterpreted. It indicates the percentage saturation of arterial blood (SpO2), but is not a direct measure of the partial pressure of oxygen dissolved in blood (PaO2). The relationship of these two is described by the oxygen dissociation curve, but each can be affected by different factors, and therefore the oxygen saturation will not always accurately predict the amount of oxygen actually being delivered to tissues.

Pulse oximetry also has other limitations. There must be a large change in partial pressure of oxygen (a fall below 75 mm Hg) before a change in saturation is sensed by the pulse oximeter. This may be especially problematic when a patient is receiving a high flow of supplemental oxygen. Another limitation is that the pulse oximeter measures peripheral arterial blood saturation rather than central arterial blood saturation. Because the central arterial saturation decreases before the peripheral saturation does, the desaturation detected by the pulse oximeter is a late sign. Because the pulse oximeter measures saturation in peripheral arteries, its accuracy is also affected by hypoperfusion, as is the case with cold extremities, peripheral vascular disease, hemodynamic instability, or extremity elevation.
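The flat upper portion of the oxygen dissociation curve explains why large falls in partial pressure go unnoticed. The sketch below uses the Severinghaus approximation of the standard adult curve, which is an assumption introduced here (the chapter names no formula) and applies only under standard conditions of pH and temperature.

```python
def severinghaus_saturation(pao2_mmhg):
    """Approximate hemoglobin saturation (%) from PaO2 in mm Hg using the
    Severinghaus equation for the standard adult dissociation curve."""
    x = pao2_mmhg ** 3 + 150.0 * pao2_mmhg
    return 100.0 / (23400.0 / x + 1.0)


# A patient on supplemental oxygen: PaO2 falls from 300 to 100 mm Hg,
# yet the saturation changes by only about two percentage points.
for pao2 in (300, 100, 75, 60):
    print(pao2, round(severinghaus_saturation(pao2), 1))
```

Only once the partial pressure approaches the range mentioned in the text does the computed saturation begin to fall appreciably.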

Potential technical sources of error in pulse oximetry measurements include interference by ambient light, motion artifact, malpositioning, and sources of electromagnetic radiation, such as cellular phones and electrocautery devices. Inherent in the design of the machine is an additional delay in the detection of hypoxia. The measures received by the photodetector are signal averaged over several seconds; thus, the pulse oximeter may not detect hypoxemia until after a considerable delay has occurred.
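The delay introduced by signal averaging can be illustrated with a simple moving average. The 8-second window, the one-sample-per-second rate, the alarm threshold of 90%, and the saturation trace are all assumptions chosen for the example; actual averaging times vary by device.

```python
def moving_average(samples, window):
    """Average each sample with up to window - 1 preceding samples."""
    averaged = []
    for i in range(len(samples)):
        chunk = samples[max(0, i - window + 1):i + 1]
        averaged.append(sum(chunk) / len(chunk))
    return averaged


def first_index_below(values, threshold):
    """Index of the first value below threshold, or None if there is none."""
    for i, value in enumerate(values):
        if value < threshold:
            return i
    return None


# Synthetic trace sampled once per second: abrupt fall from 98% to 85% at t = 10 s.
true_saturation = [98.0] * 10 + [85.0] * 20
displayed = moving_average(true_saturation, window=8)

delay_s = first_index_below(displayed, 90.0) - first_index_below(true_saturation, 90.0)
print(delay_s)  # 4
```

With these assumed settings the displayed value crosses the alarm threshold 4 seconds after the true saturation does, and that lag is added to any physiologic delay between central and peripheral desaturation.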

Other sources of error in pulse oximetry readings may be patient related. Some conditions that may affect the accuracy of pulse oximetry are several forms of dyshemoglobinemias. In addition to reduced and oxygenated hemoglobin, blood contains carboxyhemoglobin (HbCO) and methemoglobin (MetHb). Although normally present in very small amounts, these dyshemoglobins can influence the determination of oxygen saturation because they do not carry oxygen but have absorption properties similar to those of HbO2 and Hb. When HbCO or MetHb is present in higher quantities, it interferes with the absorbance ratio of reduced hemoglobin and HbO2 and causes an inaccurate sum of Hbs, thus resulting in inaccurate SpO2 readings. For nonsmokers, HbCO levels will be less than 2%; for smokers, HbCO levels can be 20% higher than normal; and for patients who have been exposed to carbon monoxide, these levels can be 40% higher than normal. Because HbCO and HbO2 absorb the same amount of 660 nm light, arterial desaturation may occur even though the pulse oximeter maintains a falsely high reading. In one study, SpO2 remained at 96%, although the HbCO was 44% because of carbon monoxide poisoning.
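The carbon monoxide case can be illustrated by comparing the true fractional saturation with a simplified model of what a two-wavelength oximeter displays. The model, in which HbCO is counted as if it were HbO2, is an assumption based on the statement above that the two absorb the same amount of 660 nm light. The split of the remaining hemoglobin (52% HbO2, 4% Hb) is hypothetical and chosen only to match the reported reading.

```python
def fractional_saturation(hbo2, hb, hbco=0.0, methb=0.0):
    """HbO2 as a percentage of all hemoglobin species (what a co-oximeter reports)."""
    return 100.0 * hbo2 / (hbo2 + hb + hbco + methb)


def displayed_with_hbco(hbo2, hb, hbco):
    """Simplified two-wavelength model: HbCO is mistaken for HbO2."""
    return 100.0 * (hbo2 + hbco) / (hbo2 + hb + hbco)


# Hypothetical composition with the 44% HbCO from the case in the text.
hbo2, hb, hbco = 52.0, 4.0, 44.0
print(displayed_with_hbco(hbo2, hb, hbco))    # 96.0
print(fractional_saturation(hbo2, hb, hbco))  # 52.0
```

Under these assumptions the displayed value looks reassuring while barely half of the hemoglobin is carrying oxygen, which is why co-oximetry is needed when carbon monoxide exposure is suspected.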

Normal MetHb levels are ordinarily less than 1%. Methemoglobinemia can be congenital or acquired; acquired cases are usually due to exposure to prilocaine, benzocaine, sulfa drugs, and nitrites. Because MetHb absorbs light at both 660 nm and 940 nm, the R/IR ratio is once again erroneous, causing the calibrated saturation to read approximately 80% to 85% depending on the percentage of MetHb present. In short, methemoglobinemia can cause persistently low saturation recordings even though functional saturation is normal.

Because co-oximetry uses four wavelengths of light, it can measure both MetHb and HbCO and can confirm that an inaccuracy in pulse oximetry readings is due to the presence of these dyshemoglobins. The validity of pulse oximetry measurements in patients with sickle cell disease is questionable. Some research has shown that pulse oximetry generally overestimates oxygen saturation but that these overestimates are not serious enough to cause misdiagnosis of hypoxemia as normoxemia. Studies have also shown that pulse oximetry underestimates oxygenation in patients in vaso-occlusive crisis.

Anemia in the presence of hypoxia may also adversely affect the accuracy of pulse oximetry measurements. Research reveals that at a saturation below 80%, the SaO₂ reading may be falsely low for anemic patients. Studies have also shown that Hb concentrations lower than 5 g/dL cause a falsely low SaO₂ in the presence of hypoxemia.

Findings about the effect of nail polish on pulse oximetry measurements are inconclusive. Although red nail polish appears to have no effect on readings, green, black, and blue polishes and artificial nails may cause lower saturation readings. Onychomycosis (nail fungus) and dirt under the nail also appear to affect measurements.

Capnography

Understanding the analysis and measurement of carbon dioxide in respired gases requires a definition of the components of the process. Capnometry is the measurement of CO₂ concentrations during the respiratory cycle. The capnometer analyzes the gases and displays the readings, and capnography is the graphic record of the measured CO₂ concentrations, usually displayed on a monitor screen.

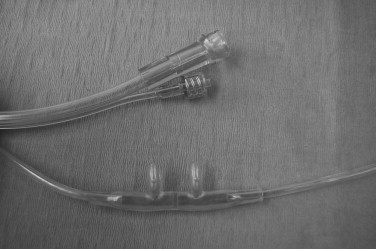

Carbon dioxide analyzers placed along the path of ventilation detect the intensity of infrared light passing across a stream of inhaled or exhaled gas. Respiratory gases are sampled from a line that runs from either the endotracheal tube or a tube connected to the oxygen tubing at the nares of a nasal cannula and are analyzed by mass spectrometry or infrared light to generate a waveform or capnogram (Figure 2-3). The capnogram is often one of many other displayed parameters on a large multipurpose monitor in the operating room; however, there are portable battery-powered end-tidal (ET) CO₂ monitors that are used for monitoring during transport.

Of clinical significance during sedation or general anesthesia are the changes in respired CO₂ that may reveal the onset of a potential complication. Evaluating end-tidal carbon dioxide (ETCO₂) levels may aid us in determining the status of the patient’s circulatory, metabolic, and respiratory systems.

CIRCULATION

Exhaled CO₂ concentrations reflect the circulatory status of the patient. A reduction in cardiac output or a reduction in blood flow to and through the lungs causes a reduction in ETCO₂. An abrupt drop in ETCO₂ can be the result of a decrease in venous return caused by hypovolemia, shock, pulmonary embolism, or cardiac arrest. Although ETCO₂ is a good indicator of circulatory function, administering high doses of epinephrine may cause peripheral vasoconstriction, which increases cardiac output and in turn causes an erroneous increase in ETCO₂.

METABOLISM

Because CO₂ is a byproduct of cellular metabolism, measurements of CO₂ output may reflect changes in respiration and circulation or altered tissue metabolism. Increases in ETCO₂ indicate increases in the metabolic rate of a mechanically ventilated patient. Conditions such as hyperthermia, pain, anxiety, acidosis, intense muscle activity (seizures), and reversal of muscle relaxants can cause an increase in expired CO₂. Even before temperature increases, a massive increase in CO₂ production occurs in malignant hyperthermia. For early detection of this potentially fatal condition, capnometry is crucial. ETCO₂ will decrease with hypometabolic conditions, such as hypothermia, increased depth of anesthesia, and increased muscle relaxation.

RESPIRATION

Information about a patient’s respiratory status may also be obtained through CO₂ monitoring. In an intubated patient, changes in ETCO₂ may signal inadvertent esophageal intubation, bronchial intubation, extubation, change in resistance of the airway, obstruction, a leak in the endotracheal tube cuff, disconnection, wearing off of the muscle relaxant, or ventilator malfunction. The normal range of ETCO₂ is 40 to 48 mm Hg, with a predictable waveform. During airway obstruction, the ETCO₂ is higher than 48 mm Hg, and the waveform flattens. These changes occur before oxygen saturation decreases. If the patient is breathing spontaneously, a decrease in ETCO₂ or the absence of waveform indicates hypoventilation or apnea. In patients with normal cardiac and pulmonary functions, the alveolar partial pressure of end-tidal CO₂ (PetCO₂) and the arterial partial pressure of CO₂ (PaCO₂) are directly proportional, unlike the measurements of partial pressure of arterial oxygen (PaO₂) and oxygen saturation, which do not correspond linearly.

A large decrease in arterial oxygenation must occur before oxygen saturation decreases. Therefore, ETCO₂ monitoring may allow us to detect anesthesia-related hypoventilation before the pulse oximeter signals a decline in oxygen saturation. In fact, many studies in both adults and children have shown this to be true.
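One way to see why saturation falls late is to evaluate an approximation of the oxyhemoglobin dissociation curve. The sketch below uses the Severinghaus empirical fit, which is not part of this chapter and assumes normal pH, temperature, and arterial carbon dioxide; it is an illustration of the curve's shape, not a clinical tool.

```python
def severinghaus_so2(po2_mmhg):
    """Approximate oxygen saturation (%) from PO2 (mm Hg).

    Severinghaus empirical fit for normal conditions (an assumption here):
    S = 1 / (23400 / (PO2**3 + 150 * PO2) + 1)
    """
    x = po2_mmhg ** 3 + 150.0 * po2_mmhg
    return 100.0 / (23400.0 / x + 1.0)


# Walk down the curve from a normal arterial PO2.
for po2 in (100, 80, 60, 40):
    print(f"PO2 {po2:3d} mm Hg -> saturation about "
          f"{severinghaus_so2(po2):.1f}%")
```

Under this approximation, a fall in PO2 from 100 to 60 mm Hg lowers saturation by only about 7 percentage points, whereas the next 20 mm Hg lowers it by more than 15. This flat upper portion of the curve is the reason the pulse oximeter responds late to hypoventilation.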

Although capnography has the advantage of being a fairly inexpensive, noninvasive adjunct for assessing a patient’s ventilation, it has limitations. Its use during sedation is controversial. Erroneous loss of waveform may occur because of mechanical obstruction (condensation, secretions, or positioning) in patients who are breathing spontaneously. From time to time, the machines go through a purge cycle to clear the line of moisture and secretions, and the waveform is lost during this cycle.

The Joint Commission on Accreditation of Healthcare Organizations (JCAHO) and the American Academy of Pediatrics advocate capnography as a component of monitoring for sedated children. Other authors, however, do not support the use of capnography as a standard in monitoring during sedation, and they argue that its use neither decreases hypoxic events nor optimizes anesthesia care. Sampling via nasal cannula or nasal hood system is considered to be an open system that allows delivered oxygen or room air to mix with and dilute expired air. Those who do not support routine capnography during sedation claim that the shortcomings of an open system, especially in children, in whom mouth breathing, crying, and breath holding are commonplace, make sampling less accurate. The use of capnography in outpatient oral and maxillofacial surgery anesthesia will most likely be standard of care in the near future.

TEMPERATURE MONITOR

The American Association of Oral and Maxillofacial Surgeons’ (AAOMS) committee on anesthesia guidelines state that temperature should be recorded for all intubated, anesthetized patients and may also be recorded for patients who are not intubated. Whenever patients are anesthetized, a means of providing continuous temperature monitoring should be readily available and should be used when changes in body temperature are anticipated or suspected. While under general anesthesia, a patient loses the normal mechanisms of regulating body temperature. Although continuous temperature measurement in the sedated patient may not be as crucial as measurement in a patient under general anesthesia, whenever a variation from normal is suspected preoperatively it should be evaluated so that the potential negative outcomes of hypothermia and hyperthermia, discussed later in this section, can be prevented.

In pediatric patients, for whom the ratio of surface area to body mass is increased, in patients who are receiving large amounts of intravenous fluid, and in patients who are undergoing major surgery involving a body cavity, a decrease in body temperature is anticipated. Hypothermia may lead to adverse postoperative outcomes, such as prolonged recovery, increased risk of wound infection, and increased cardiac morbidity. As core body temperature increases, the patient’s ability to tolerate stress decreases and the workload of the respiratory and cardiovascular systems increases. Hyperthermia may signal severe physiologic disturbances, such as drug reaction, transfusion reaction, hyperthyroidism, and malignant hyperthermia. Body temperature should be monitored continually when agents that may trigger malignant hyperthermia, such as volatile anesthetics and depolarizing muscle relaxants, are used; however, even if these agents are not being used, a means of monitoring temperature should be at least readily available.

Temperature monitors rely on a wide variety of technologies to display accurate measurements. A thermistor is a temperature-sensing element that exhibits a large change in resistance in response to a small change in temperature. Its advantages include disposable probes, sensitivity to small temperature changes, accuracy, and low cost. Like the thermistor, a thermocouple determines temperature by using an electrical current. It consists of two wires of dissimilar metals welded together. Its advantages are similar to those of the thermistor; however, thermocouples do not reveal small temperature changes as accurately as the thermistor. A third type of thermometer contains a platinum wire whose electrical resistance varies linearly with temperature. Like both the thermistor and the thermocouple, the platinum wire is an accurate, continuous, inexpensive thermometer. All of these thermometers employ a probe that is sealed within a casing to insulate it from the wet environment inside the body.
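The linear relation between resistance and temperature described for the platinum wire can be sketched in a few lines of Python. The reference resistance (100 ohms at 0 °C) and the temperature coefficient (0.00385 per °C) are typical values for a common industrial platinum sensor and are assumptions here, not specifications of any anesthesia monitor.

```python
# Assumed sensor constants (typical platinum element, illustrative only).
R0_OHMS = 100.0        # resistance at 0 degrees C
ALPHA_PER_C = 0.00385  # fractional resistance change per degree C


def resistance_at(temp_c):
    """Linear model: R = R0 * (1 + alpha * T)."""
    return R0_OHMS * (1.0 + ALPHA_PER_C * temp_c)


def temperature_from(resistance_ohms):
    """Invert the linear model to recover temperature from resistance."""
    return (resistance_ohms / R0_OHMS - 1.0) / ALPHA_PER_C


# Resistance expected at normal body temperature, and the round trip back.
r_body = resistance_at(37.0)
print(f"{r_body:.3f} ohms -> {temperature_from(r_body):.1f} degrees C")
```

Because the model is linear, each degree of temperature change produces the same change in resistance (0.385 ohms with these assumed constants), which makes the conversion a single subtraction and division.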

Liquid crystal thermometers use a system of organic compounds that melt and recrystallize at specific temperatures. They consist of an adhesive-backed strip that is placed on the skin. They are a practical method of temperature monitoring because they are inexpensive, convenient, disposable, noninvasive, and transferable with the patient. They are, however, less accurate than other thermometers, and their readings can be influenced by factors in the environment, such as humidity or the presence of a heating lamp. The infrared thermometer, although it can be used only for intermittent measurements, is accurate, noninvasive, and convenient. It measures the amount of infrared radiation emitted by an object, such as the ear canal, and converts it to a temperature recording. The accuracy of this thermometer is somewhat technique sensitive and depends on the degree of aim and penetration into the ear canal.

Common sites for measurement during routine general anesthesia are the nasopharynx, esophagus, tympanic membrane, oral cavity, rectum, bladder, and trachea. Because of the nature of oral surgical procedures, probes placed into the nasopharynx or oral cavity can become displaced by instrumentation or can relay inaccurate readings because of irrigation or continual disturbance. Because patients under sedation may not tolerate probes at these sites, the skin and axilla can be used for measurement during procedures performed with sedation rather than general anesthesia. Skin measurements are frequently taken with a liquid crystal thermometer placed on the forehead. Most of these thermometers are adjusted to reflect core body temperature; because the forehead has good blood flow and little subcutaneous fat, accurate measurements can be obtained.

As is true of all monitors, temperature monitors have limitations. For thermometry the limitations include incorrect readings and potential hazards, such as monitoring site damage from burn or perforation. Faulty probe connections, improper probe placement, and machine failure are potential sources of error. To prevent high readings, internal probes must be kept dry at their connections. Burns from temperature probes acting as a ground for an electrosurgical unit can occur, as can rectal, tympanic membrane, and esophageal perforations caused by temperature probes.

NEUROMUSCULAR TRANSMISSION MONITOR

Some of the primary uses of muscle relaxants during anesthesia are to facilitate endotracheal intubation, eliminate patient movement, provide relaxation of respiratory muscles, and create a more favorable surgical environment. Different degrees of neuromuscular blockade (NMB) may be desirable for each of these uses. Because of these various states of relaxation and because patients exhibit a large variation in response to muscle relaxants, monitoring the level of NMB is important. The extent of NMB can be assessed by stimulating a peripheral motor nerve with an electrical current and measuring the response of the muscles innervated by that nerve. Commonly, the ulnar nerve is stimulated at the wrist with a current of 20 to 60 mA while the presence and degree of thumb adduction by the adductor pollicis muscle are monitored. The response of the facial muscles to electrical stimulation reflects that of airway musculature and is a good indicator that NMB is adequate for intubation. Stimulating the facial nerve may be ideal for determining intubation readiness; however, the response of the adductor pollicis is usually adequate.

During induction of anesthesia, the nerve stimulator is used to determine whether the laryngeal muscles are sufficiently relaxed to allow passage of the endotracheal tube through open vocal cords. During maintenance of anesthesia, NMB should be deep enough to prevent patient movement but not so deep that postoperative respiratory support is needed. At the end of the procedure, the nerve stimulator allows the anesthetist to determine whether NMB is reversible and, if so, the degree of that reversal. At this point, monitoring with a peripheral muscle, such as the adductor pollicis, is ideal because this muscle is one of the last to recover. Thus recovery in this muscle will most likely indicate recovery in the respiratory muscles.

Patterns of stimulation and response are commonly delivered as a single twitch, train-of-four (TOF), or tetanus. When compared with control values, the results achieved by single-twitch stimulus can be used to identify intubation readiness. As the block progresses, the twitch response diminishes. However, the depression of the response is the same with depolarizing and nondepolarizing muscle relaxants. Thus the use of single-twitch stimulus will not allow the anesthetist to distinguish between depolarizing and nondepolarizing blocks.

The TOF pattern of stimulation consists of four single and equal twitches delivered over a period of about 2 seconds and does allow differentiation of depolarizing and nondepolarizing blocks. A depolarizing muscle relaxant will cause equal depression of the height of all four twitches. A nondepolarizing muscle relaxant will cause progressive fading among the twitches until deep blockade is established and the fourth twitch is eliminated, followed by the third, and so forth. TOF stimulation is advantageous because it requires no control response and is a more sensitive monitor of NMB than the single twitch.
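The TOF patterns described above can be sketched as a small Python example. Twitch heights are given in arbitrary relative units; the fourth-to-first twitch ratio and the 0.1 fade tolerance are illustrative assumptions that do not come from this chapter, and the function names are hypothetical.

```python
def tof_count(heights):
    """Number of detectable twitches among the four responses."""
    return sum(1 for h in heights if h > 0.0)


def tof_ratio(heights):
    """Fourth-to-first twitch height ratio, or None if the first is absent."""
    first, fourth = heights[0], heights[3]
    if first <= 0.0:
        return None
    return fourth / first


def describe_pattern(heights, fade_tolerance=0.1):
    """Label a train of four responses as fading or non-fading."""
    if tof_count(heights) < 4:
        return "fade with lost twitches (nondepolarizing pattern)"
    if tof_ratio(heights) < 1.0 - fade_tolerance:
        return "fade (nondepolarizing pattern)"
    return "no fade (no block, or depolarizing pattern)"


# Hypothetical recordings, first twitch to fourth.
print(describe_pattern([1.0, 0.8, 0.6, 0.4]))  # progressive fade
print(describe_pattern([0.5, 0.5, 0.5, 0.5]))  # equal depression
print(describe_pattern([0.3, 0.1, 0.0, 0.0]))  # third and fourth lost
```

Because the fourth twitch is compared with the first twitch of the same train, no separate control response is needed to detect fade, which matches the advantage of TOF noted above. Equal depression of all four twitches cannot be distinguished from an unblocked response without a baseline, so the sketch groups those two cases together.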

Tetanic stimulation involves repeated single twitches, usually on the order of 30 to 100 stimuli per second. With no NMB, sustained contraction of the muscle is seen. With depolarizing drugs, the tetanic contractions are sustained but depressed uniformly; with nondepolarizing drugs, the tetanus is both depressed and not sustained. The advantage of tetanic stimulation is that deeper levels of NMB may be monitored when a single twitch or TOF fails to produce a response. The disadvantage is that it is painful and can only be used when the patient is anesthetized.

DEPTH OF SEDATION

Assessment of depth of sedation is necessary for providing a good experience for the patient while maintaining patient safety. Sustaining an adequate level of amnesia, analgesia, and anxiolysis should be balanced with preventing respiratory depression, laryngospasm, and cardiac problems, such as hypotension and dysrhythmias.

Subjective measures (scales) include clinical scoring systems that can evaluate a patient’s level of sedation; however, the use of such scales requires waking or disturbing patients repeatedly so that their level of responsiveness can be determined. These scales are easy to use and are indicated whenever sedative medications are given. Although many sedation scoring systems exist, one commonly used subjective scale is the Ramsay Sedation Scale. This was the first established scale for determining arousability in sedated patients. It scores sedation at six levels based on the patient’s ability to respond. The requirement of response, however, means that the Ramsay Sedation Scale cannot be used for patients undergoing NMB (Box 2-2). Other clinical assessment tools are the Observer’s Assessment of Alertness/Sedation Scale, the Riker Sedation-Agitation Scale, and the Motor Activity Assessment Scale. These scales measure the degree of alertness of patients given sedative medications. Factors limiting the use of such scales include their reliance on individual interpretation of definitions and measurement criteria.

Box 2-2: Ramsay Sedation Scale

1. Patient is anxious and agitated or restless, or both.
2. Patient is cooperative, oriented, and tranquil.
3. Patient responds to commands.
4. Patient is asleep but with brisk response to light glabellar tap or loud auditory stimulus.
5. Patient is asleep with sluggish response to light glabellar tap or loud auditory stimulus.
6. Patient is asleep with no response.
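For charting purposes, the six levels can be held in a simple lookup table. The sketch below is one possible representation in Python; the names `RAMSAY_LEVELS`, `describe_ramsay`, and `is_asleep` are hypothetical, and the descriptions are shortened from the scale above.

```python
# Ramsay Sedation Scale levels, abbreviated from the descriptions above.
RAMSAY_LEVELS = {
    1: "anxious and agitated or restless, or both",
    2: "cooperative, oriented, and tranquil",
    3: "responds to commands",
    4: "asleep, brisk response to glabellar tap or loud auditory stimulus",
    5: "asleep, sluggish response to glabellar tap or loud auditory stimulus",
    6: "asleep, no response",
}


def describe_ramsay(score):
    """Return a chart-ready description for a Ramsay score of 1 to 6."""
    if score not in RAMSAY_LEVELS:
        raise ValueError(f"Ramsay score must be 1 to 6, got {score!r}")
    return f"Ramsay {score}: {RAMSAY_LEVELS[score]}"


def is_asleep(score):
    """Levels 4 through 6 describe a patient who is asleep."""
    if score not in RAMSAY_LEVELS:
        raise ValueError(f"Ramsay score must be 1 to 6, got {score!r}")
    return score >= 4


print(describe_ramsay(3))
print(is_asleep(5))
```

Rejecting scores outside 1 to 6 keeps an entry error from being recorded as a valid sedation level.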
