Introduction

In this study, we aimed to give insight into the article review process by investigating the characteristics and the fate of manuscripts submitted to the American Journal of Orthodontics and Dentofacial Orthopedics (AJO-DO).

Methods

The following information was obtained for original articles submitted to the AJO-DO in 2008: (1) for rejected articles: the reasons for rejection and the journal of subsequent publication when applicable; (2) for accepted articles: the number of revisions and the time elapsed to publication; and (3) for all articles: study topic, study design, area of origin, and statistically significant findings. Findings were reported using descriptive statistics, the chi-square test for equality of proportions, and multiple regression where appropriate. Post-hoc pair-wise tests were checked against the Bonferroni correction to account for multiple testing.

Results

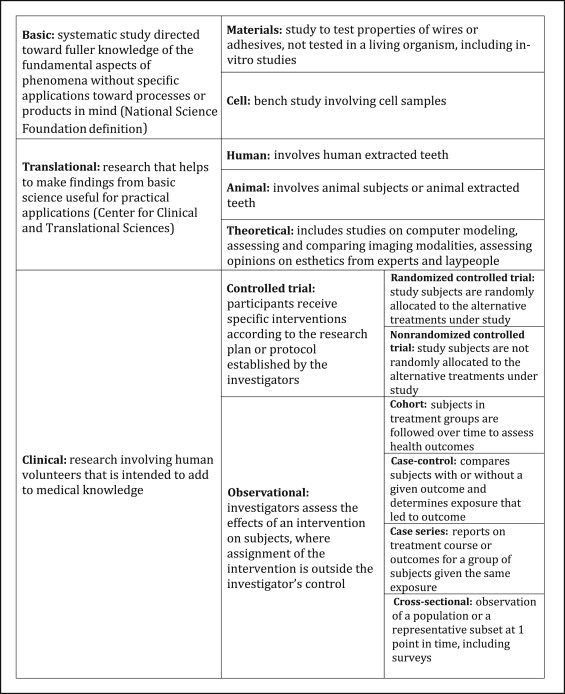

Of the 440 original articles submitted to the AJO-DO in 2008, 116 (26%) were accepted and published an average of 21 months (SD, 5 months) after acceptance. Rejected articles totaled 324 (74%), with 137 (42%) finding subsequent publication an average of 22 months (SD, 11 months) after rejection by the AJO-DO. The top 3 reasons for rejection by the AJO-DO were (1) poor study design (59% of rejected articles), (2) outdated or unoriginal topic (42%), and (3) inappropriate for the AJO-DO's audience (27%). Manuscripts rejected for poor study design had the least success for subsequent publication, whereas those rejected as inappropriate for the AJO-DO had the highest rate of publication elsewhere. Area of origin was significantly associated with acceptance by the AJO-DO, with articles from the United States and Canada most likely to be accepted (P < 0.01). Articles from countries with the lowest publication rate in the AJO-DO had the highest publication rate elsewhere. The presence of statistically significant findings was significantly associated with acceptance by the AJO-DO (P = 0.013) but not with publication elsewhere (P = 0.77).

Conclusions

Rejection by the AJO-DO does not preclude publication elsewhere, although articles rejected for poor study design were least likely to be eventually published. Many publishable articles are rejected by the AJO-DO as inappropriate for its readership, and these were the most likely to find publication elsewhere. Articles with the highest chance of acceptance by the AJO-DO were those from the United States and Canada and those reporting statistically significant results.

Highlights

- This study explored the characteristics and dispositions of manuscripts submitted to the AJO-DO.
- Articles were usually rejected for more than 1 reason.
- The 3 reasons for rejection cited most often were poor study design/small sample size, similarity to articles already published, and topic inappropriate for AJO-DO audience.
- Articles from the U.S. and Canada had the most publishing success in the AJO-DO.
- Authors are advised to submit to an appropriate journal, use a well-designed and well-described study with adequate sample sizes, and emphasize the novelty and relevance of their work.

While there exists among researchers a consensus regarding proper study design and scientific reporting, even the most seasoned authors see their work rejected periodically. Understanding the reasons for a manuscript’s rejection may help authors identify areas needing improvement, while editors can use this information to more clearly communicate their expectations to authors and reviewers. The medical literature includes many studies examining the main reasons for manuscript rejection, mostly in editorial form. In the dental literature, there are considerably fewer studies on this topic, although both medical and dental articles emphasize many of the same reasons for rejection. These reasons include absence of novel findings, irrelevance to a journal’s scope, flawed study design, and poor English and grammar.

Few investigations in either the medical or dental literature have compared specific characteristics between accepted and rejected articles, such as country of origin, statistically significant results, and study topic, which could potentially reveal sources of bias in the peer-review process. Regarding the effects of statistically significant findings, many have explored publication bias, which is a review committee’s tendency to publish articles with positive findings or an author’s preference to write and submit articles with positive findings. Koletsi et al examined the contents of 5 top orthodontic journals and found that 75% to 90% of published studies contained statistically significant results. Lee et al, in a study published in Clinical Experimental Ophthalmology, asserted that statistically significant results do not affect publication. An article in the Journal of the American Medical Association raised the question of whether a submission without significant results is more likely to be rejected or whether the majority of submitted articles have statistically significant results. The relationship between study topic and manuscript rejection has not been explored in great depth in the medical or the dental literature.

Another area of interest regarding rejected articles is their ultimate fate after initial rejection. Studies in the medical literature have reported that rejected articles are usually subsequently published in journals with lower impact factors than the journal to which they were initially submitted. The impact factor of a journal for a particular year is defined as the number of citations received in that year by articles the journal published in the previous 2 years, divided by the total number of articles it published in those 2 years. Journals are assigned an impact factor in Journal Citation Reports, published by Thomson Reuters. A journal with a high impact factor is usually judged as higher in quality, although using the impact factor as a measure of journal quality has its limitations. A journal’s article rejection rate may also be used to measure journal quality, assuming that a higher-quality journal will have a higher article rejection rate. However, neither impact factor nor rejection rate is a definitive measure of journal quality, and one does not necessarily influence the other. The time to subsequent publication varies greatly among articles, but most medical studies showed that articles were published within 3 years of initial rejection. The dental literature lacks information on the fate of rejected articles.
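The impact factor definition can be written as a short formula. This is the conventional 2-year form; the symbols below are notation introduced here for illustration and do not appear in the original text.

```latex
\mathrm{IF}_{y} \;=\; \frac{C_{y}(y-1) + C_{y}(y-2)}{N_{y-1} + N_{y-2}}
```

Here $C_{y}(t)$ is the number of citations received in year $y$ by articles the journal published in year $t$, and $N_{t}$ is the number of articles the journal published in year $t$. For example, a journal whose 200 articles from the 2 preceding years were cited 300 times this year would have an impact factor of 1.5.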

In this study, we looked at original manuscripts submitted to the American Journal of Orthodontics and Dentofacial Orthopedics (AJO-DO) in 2008 and aimed to give descriptive statistics about accepted and rejected articles, as well as to examine the interactions among manuscript characteristics and acceptance, rejection, and subsequent publication. The AJO-DO was deemed an appropriate journal to investigate, as American orthodontists regard it as the premier purveyor of clinical advances in orthodontics. It receives a wide variety of submissions from around the world and is appreciated by an international audience. Its impact factor is the highest among the orthodontic journals, with a 5-year impact factor of 1.924.

Material and methods

This study was carried out with the approval of the University of Washington’s Human Subjects Division (application number 42908). To obtain the data sample, access was granted by the editor of the AJO-DO in 2012 to search its electronic archives for original articles submitted between January 1, 2008, and December 31, 2008; this yielded 461 articles for use in this study. The database included the abstracts of the submitted articles but not full manuscripts.

Data collection

For each manuscript included in the study, the following information was recorded when applicable: AJO-DO manuscript number, corresponding author’s name, date submitted, date of final AJO-DO decision, days elapsed to AJO-DO decision, study topic classification (according to the AJO-DO submission form), number of revisions, type of revisions, AJO-DO publication month and year, days elapsed to publication in the AJO-DO, reason for rejection, country of origin, presence of statistically significant findings, study design, final fate (published in the AJO-DO, published elsewhere, or not published), title of journal of subsequent publication, and month and year of subsequent publication.

Two investigators carried out the data collection, with investigator 1 (N.F.) collecting data for half of the articles and investigator 2 (J.F.) collecting data for the other half. For study design and reason for rejection, both investigators determined these data independently for all articles, and then intrarater and interrater reliabilities were determined by comparing their findings for 100 consecutive articles and reporting the reliability as the percentage of agreement among those articles. When the 2 investigators differed, a consensus was reached through review and discussion. In the particular case of determining the study design for articles appearing to be controlled trials, the final determination was made after discussion with a third investigator with many years’ experience as an associate editor for the AJO-DO.
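Reliability reported as a percentage of agreement can be illustrated with a minimal sketch. The function name and the five example labels below are hypothetical and are not data from the study; the same calculation would apply to the 100 consecutive articles described above.

```python
def percent_agreement(ratings_a, ratings_b):
    """Return the percentage of items on which two sets of ratings agree."""
    if len(ratings_a) != len(ratings_b) or not ratings_a:
        raise ValueError("rating lists must be non-empty and of equal length")
    matches = sum(a == b for a, b in zip(ratings_a, ratings_b))
    return 100.0 * matches / len(ratings_a)


# Hypothetical study-design labels for five abstracts (illustration only).
rater_1 = ["cohort", "case series", "cross-sectional", "cohort", "case control"]
rater_2 = ["cohort", "case series", "cohort", "cohort", "case control"]

interrater = percent_agreement(rater_1, rater_2)
print(f"Interrater agreement: {interrater:.0f}%")
```

For intrarater reliability, the two lists would instead hold the same investigator's first and repeated classifications of the same articles.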

Article disposition

When an article is submitted to the AJO-DO, it can receive one of several review decisions: (1) accept without revision, to be published after copyediting; (2) return for minor revisions because some changes are needed in the text to justify the technique or method used, retreat the data, or add a figure or table; (3) return for major revisions to reanalyze data, repeat part of the experiment with an alternative method, replace or eliminate inappropriate elements including figures, reorganize tables, or follow journal submission guidelines for references, figures, submission elements, and so on; (4) reject but submit to another journal because the article has merit, but the topic would be of limited interest to AJO-DO readers or has been recently covered in the AJO-DO; (5) reject after review because a team of reviewers determined that there are fundamental errors in the design or the sample, inappropriate methods, or confounding variables that were not detected; (6) reject after an unsatisfactory revision because a team of reviewers determined that the revisions did not meet the requirements for publication in the AJO-DO; or (7) reject without review because the editor determined that the article was fundamentally flawed or inappropriate for the AJO-DO audience.

For this study, an accepted article was defined as one that received a decision of “accept without revision” or was accepted after resubmission with revisions. A rejected article was defined as one that received a decision of “reject but submit to another journal,” “reject after review,” or “reject without review.” Articles that were returned to authors for revisions but not resubmitted to the AJO-DO were excluded from the study.

Study topic

The main topic of each article was determined from the topic classifications chosen by the authors during the AJO-DO submission process. If more than 1 topic was chosen for a manuscript, the main topic was determined by using the context given in the article’s abstract. Because of the many possible study topics, only topics with 30 or more observations were included in the statistical analysis.

Statistical significance

The presence of statistically significant findings was determined by using the article abstracts, with each article placed into 1 of 3 categories: (1) statistically significant findings (abstract includes statements and numbers indicating statistically significant results), (2) null findings (abstract includes statements and numbers indicating that the statistical analysis did not produce a significant result), or (3) no statistical analysis (abstract does not indicate that any statistical analysis was done in the study).

Area of origin

By using the recorded country of origin, articles were grouped into 7 areas of origin for analysis: (1) Asia, (2) Australian continent, (3) Eastern Europe (former Soviet Bloc countries), (4) Latin America, (5) Middle East and Africa (including Turkey), (6) the United States and Canada, and (7) Western Europe.

Study design

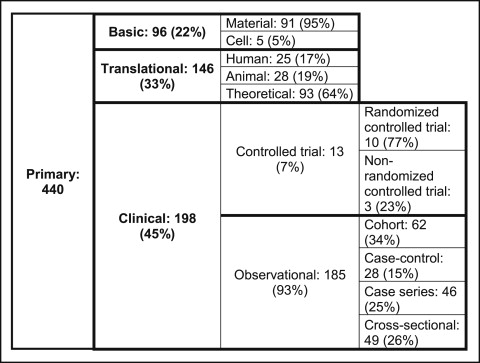

The article abstracts were also used to determine study design. Figure 1 shows how articles were grouped in different study design types and gives the definitions used to guide the determination of the study design.

Reason for rejection

The main reason for rejection was determined by reading the editor’s notes in the database as well as reading the reviews submitted by the reviewers. Given that more than 1 reason was usually given for rejection of an article, the investigators recorded 1 or 2 main reasons based on what was most commonly emphasized by the reviewers and the editor.

Publication elsewhere

To determine whether a rejected article was eventually published elsewhere, the title of the article and the corresponding author’s name were used to search PubMed, Scirus, Scopus, and Google Scholar. Article abstracts were compared when an article may have been subsequently published with a modified title. If the article was not found by any of these search methods, it was categorized as “not published.” If an article was published elsewhere, the name of the journal of eventual publication as well as the month and year of publication were recorded.

A list of all the journals of subsequent publication was made, and the 2010 impact factor (if available) was recorded for the journals that appeared most frequently on the list. The impact factor for 2010 was chosen for comparing journals, because the overwhelming majority of articles submitted in 2008 were published in AJO-DO or elsewhere in 2010.

Statistical analysis

Descriptive statistics were used to report the characteristics of accepted and rejected articles according to the data collected for each article as outlined in the previous section. Associations between article variables (study topic, statistical significance, area of origin, and study design) and outcomes (acceptance by the AJO-DO or publication elsewhere) were explored and reported by using the chi-square test for equality of proportions in situations involving large sample sizes and the Fisher exact test when 5 or fewer articles had any particular combination of characteristics and outcomes. Significance was assigned at the P < 0.05 level. When statistical significance at the 0.05 level was observed for any factor having more than 2 levels, post-hoc pair-wise tests were performed and then checked against the Bonferroni correction to account for potential type 1 error caused by multiple testing. Odds ratios (ORs) and 95% confidence intervals (CIs) were also calculated for pair-wise comparisons.
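The Fisher exact test used for sparse cells can be sketched with the standard library alone. This is a minimal illustration, not the authors' software: the function name is hypothetical, the counts are made up, and the two-sided rule used here (summing all tables no more probable than the observed one) is one common convention.

```python
from math import comb


def fisher_exact_two_sided(a, b, c, d):
    """Two-sided Fisher exact P value for the 2x2 table [[a, b], [c, d]]."""
    row1, row2, col1 = a + b, c + d, a + c
    denom = comb(row1 + row2, col1)

    def prob(x):
        # Hypergeometric probability of x in the top-left cell, margins fixed.
        return comb(row1, x) * comb(row2, col1 - x) / denom

    lowest = max(0, col1 - row2)
    highest = min(row1, col1)
    p_observed = prob(a)
    # Add up every table that is no more likely than the observed table.
    total = sum(
        prob(x)
        for x in range(lowest, highest + 1)
        if prob(x) <= p_observed * (1 + 1e-9)
    )
    return min(1.0, total)


# Hypothetical counts: rows are two groups, columns are accepted / rejected.
p_value = fisher_exact_two_sided(3, 1, 1, 3)
print(f"Two-sided Fisher exact P = {p_value:.4f}")
```

With counts this small the chi-square approximation is unreliable, which is why an exact test is preferred for such cells.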

Because many articles had more than 1 reason for rejection, multivariate logistic regression was used to examine the association between each reason and the outcome “published elsewhere.” Adjusted ORs and 95% CIs were calculated for each reason for rejection.

Results

For study design, the intrarater reliabilities were 97% for investigator 1 and 96% for investigator 2. The interrater reliability for study design was 97%. For reasons leading to rejection, the intrarater reliabilities were 95% for both investigators. Their interrater reliability for the reason for rejection was 92%.

Of the 461 original articles submitted to the AJO-DO in 2008, we excluded 21 from the study: 2 case reports submitted as original articles, 15 articles not returned after revision requests, and 4 articles that were rejected and then resubmitted to the AJO-DO after 2008 and accepted for publication.

After the exclusions, the final sample size was 440 original articles, whose disposition is presented in Figure 2. The percentages of articles given each final review decision are as follows: 21%, reject without review; 27%, reject after review; 25%, reject but submit elsewhere; 17%, accept after major revisions; 9%, accept after minor revisions; and 1%, reject after unsatisfactory revision.

Study topic

Table I shows the distribution and the fate of all submitted articles among the main study topics. A chi-square test of equality of proportions found that among the 6 most common topics, study topic was not significantly associated with acceptance by the AJO-DO (P = 0.18). Similarly, statistical tests for rejected articles showed that study topic was not associated with publication elsewhere (P = 0.057).

| Study topic | Submitted (N = 440) | Accepted (n = 116), n (%) | Rejected, published elsewhere (n = 137) | Rejected, not published (n = 187) |
|---|---|---|---|---|
| Appliances | 54 | 10 (19) | 17 | 27 |
| Biostatistics | 3 | 1 (33) | 0 | 2 |
| Bonding | 56 | 14 (25) | 22 | 20 |
| Business | 8 | 1 (13) | 4 | 3 |
| Dental anomalies | 12 | 4 (33) | 2 | 6 |
| Diagnosis and treatment planning | 70 | 15 (21) | 27 | 28 |
| Digital technology | 4 | 1 (25) | 1 | 2 |
| Evidence based | 3 | 1 (33) | 1 | 1 |
| Growth and development | 32 | 7 (22) | 13 | 12 |
| Histology | 13 | 0 (0) | 7 | 6 |
| Imaging | 41 | 17 (41) | 4 | 20 |
| Indexes and public health | 11 | 3 (27) | 4 | 4 |
| Lasers | 1 | 0 (0) | 1 | 0 |
| Materials | 23 | 6 (26) | 7 | 10 |
| Periodontal | 1 | 0 (0) | 1 | 0 |
| Psychological and social | 18 | 8 (44) | 4 | 5 |
| Surgery | 5 | 1 (20) | 1 | 3 |
| TMD and function | 5 | 1 (20) | 2 | 2 |
| Treatment and biomechanics | 80 | 26 (33) | 18 | 36 |

Statistical significance

The great majority of original articles submitted to the AJO-DO in 2008 reported significant findings, and those articles had a higher rate of acceptance than did articles without significant findings and those without statistical analysis (Table II). The odds of acceptance for articles with statistically significant findings were 3.5 times higher than for articles that included statistical tests but no findings of significance (P = 0.013; OR, 3.5; 95% CI, 1.2-10.1). Among the rejected articles, the rates of publication elsewhere did not differ significantly between those with and those without significant findings (P = 0.77).
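The odds ratio for acceptance can be recomputed from the counts in Table II. The sketch below assumes a Wald (log-scale) confidence interval, which the text does not name as the method used; the function name is hypothetical. Under that assumption the result rounds to the reported OR of 3.5 with limits of 1.2 and 10.1.

```python
from math import exp, log, sqrt


def odds_ratio_wald_ci(a, b, c, d, z=1.96):
    """Odds ratio and Wald 95% CI for the 2x2 table [[a, b], [c, d]]."""
    odds_ratio = (a * d) / (b * c)
    # Standard error of log(OR); the Wald interval is an assumption here.
    se_log_or = sqrt(1 / a + 1 / b + 1 / c + 1 / d)
    lower = exp(log(odds_ratio) - z * se_log_or)
    upper = exp(log(odds_ratio) + z * se_log_or)
    return odds_ratio, lower, upper


# Counts from Table II: rejected = published elsewhere + not published.
sig_accepted, sig_rejected = 94, 98 + 137
null_accepted, null_rejected = 4, 16 + 19

or_value, ci_low, ci_high = odds_ratio_wald_ci(
    sig_accepted, sig_rejected, null_accepted, null_rejected
)
print(f"OR = {or_value:.1f}, 95% CI = {ci_low:.1f} to {ci_high:.1f}")
```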

| Statistical significance | Accepted (%) (n = 116) | Rejected, published elsewhere (%) (n = 137) | Rejected, not published (%) (n = 187) | All (%) (N = 440) |
|---|---|---|---|---|
| Significant findings | 94 (29) | 98 (30) | 137 (42) | 329 (75) |
| No significant findings | 4 (10) | 16 (41) | 19 (49) | 39 (9) |
| No statistical analysis | 18 (25) | 23 (32) | 31 (43) | 72 (16) |

Area of origin

The 440 original articles included in this study came from 43 countries. The disposition of articles from each area of origin is shown in Table III. Area of origin was found to be significantly associated with acceptance (P < 0.01). Post-hoc pair-wise tests indicated statistically significant differences in the rates of acceptance between 3 pairs, with corresponding ORs and 95% CIs: Asia and Middle East/Africa: 3.5 (1.6, 7.7); Latin America and the United States/Canada: 7.4 (3.4, 15.0); and Middle East/Africa and the United States/Canada: 8.2 (3.5, 18.0).

| Area of origin | Accepted (%) (n = 116) | Rejected, published elsewhere (%) (n = 137) | Rejected, not published (%) (n = 187) | All (%) (N = 440) |
|---|---|---|---|---|
| Asia | 29 (30) | 31 (32) | 37 (38) | 97 (22) |
| Australia | 1 (50) | 0 (0) | 1 (50) | 2 (<1) |
| Eastern Europe | 3 (43) | 3 (43) | 1 (14) | 7 (2) |
| Western Europe | 20 (30) | 25 (38) | 21 (32) | 66 (15) |
| Latin America | 11 (12) | 27 (29) | 54 (59) | 92 (21) |
| Middle East and Africa | 10 (11) | 41 (45) | 41 (45) | 92 (21) |
| US and Canada | 42 (50) | 10 (12) | 32 (38) | 84 (19) |

According to the Bonferroni correction, P values had to be below 0.05/15 = 0.0033 to be considered significant.
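The Bonferroni check for pair-wise comparisons can be sketched from the acceptance counts in Table III. Several choices below are assumptions rather than the authors' stated method: Australia is left out so that 6 areas give the 15 pairs implied by 0.05/15, a Yates continuity correction is applied, and every pair uses a chi-square test even where counts are small. Under these assumptions the sketch appears to flag the same 3 pairs reported in the text.

```python
from itertools import combinations
from math import erfc, sqrt

# (accepted, total submitted) per area, from Table III.
# Australia is omitted (an assumption) so that 6 areas yield 15 pairs.
areas = {
    "Asia": (29, 97),
    "Eastern Europe": (3, 7),
    "Western Europe": (20, 66),
    "Latin America": (11, 92),
    "Middle East and Africa": (10, 92),
    "US and Canada": (42, 84),
}


def chi2_p_2x2(a, b, c, d, yates=True):
    """P value of the 1-df chi-square test on the 2x2 table [[a, b], [c, d]]."""
    n = a + b + c + d
    diff = abs(a * d - b * c)
    if yates:
        # Continuity correction; whether the authors used one is not stated.
        diff = max(0.0, diff - n / 2)
    stat = n * diff ** 2 / ((a + b) * (c + d) * (a + c) * (b + d))
    # Survival function of the chi-square distribution with 1 df.
    return erfc(sqrt(stat / 2))


pairs = list(combinations(areas, 2))
threshold = 0.05 / len(pairs)  # Bonferroni-corrected significance level

results = {}
for first, second in pairs:
    (acc_1, n_1), (acc_2, n_2) = areas[first], areas[second]
    results[(first, second)] = chi2_p_2x2(acc_1, n_1 - acc_1, acc_2, n_2 - acc_2)

significant = [pair for pair, p in results.items() if p < threshold]

print(f"{len(pairs)} pairs, threshold = {threshold:.4f}")
for pair, p in sorted(results.items(), key=lambda item: item[1]):
    flag = "significant" if p < threshold else "not significant"
    print(f"{pair[0]} vs {pair[1]}: P = {p:.4f} ({flag})")
```

Pairs involving Eastern Europe rest on only 7 articles, so an exact test would be the more careful choice for those comparisons.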

Area of origin was also significantly associated with publication elsewhere (P = 0.007). This statistical analysis was performed by excluding Australia and Eastern Europe, which only had 1 rejected article each. In this sample, rejected articles from Western Europe were the most frequently published elsewhere, whereas those from the United States and Canada were the least frequently published elsewhere. Post-hoc pair-wise tests failed to show a significant difference between any pair at the Bonferroni-corrected significance threshold of 0.05/10 = 0.005.

Study design

Figure 3 shows the number of articles in each category of study design. No aspect of study design was found to be significantly associated with acceptance. Among the rejected articles, the only significant relationship between study design and publication elsewhere was in observational studies, where the following rates of publication elsewhere were observed: cohort, 36%; case control, 35%; case series, 21%; and cross-sectional, 66%.